
Before we start with the Hadoop setup process on Ubuntu Linux for a single-node cluster, let us briefly look at what Hadoop is and why it is used.

What is Hadoop?

Apache Hadoop, as the name suggests, is part of the Apache project. It is a free, Java-based framework used to store and analyse data on commodity hardware in a distributed computing environment.

As mentioned in my previous blog post, Introduction to Big Data, there are many solutions for storing and analysing big data, for example MPP (Massively Parallel Processing) databases and NoSQL databases such as MongoDB and Apache Cassandra.

Why use Hadoop?

Hadoop runs on commodity hardware, which makes it cost-effective.

Hadoop also takes care of fault tolerance by replicating data, so that it can be recovered in the event of a failure.

Most importantly, Hadoop can manage structured, semi-structured and unstructured data, which makes it flexible.

Hadoop also keeps network usage low and can handle very large data sets, which makes it truly scalable.

Basic Linux shell Commands (Linux users can skip this part)

Let us go over some basic Linux shell commands that are required for the Hadoop installation process. This will be helpful for non-Linux users.

Please note that each command mentioned here has many more options and can be used in several other contexts; I have listed only the options that are required for the Hadoop setup.

Hadoop Architecture in brief

Though I will discuss the Hadoop architecture in detail in my next post, the installation requires some basic knowledge of the type of installation we are performing and of the components of this architecture.

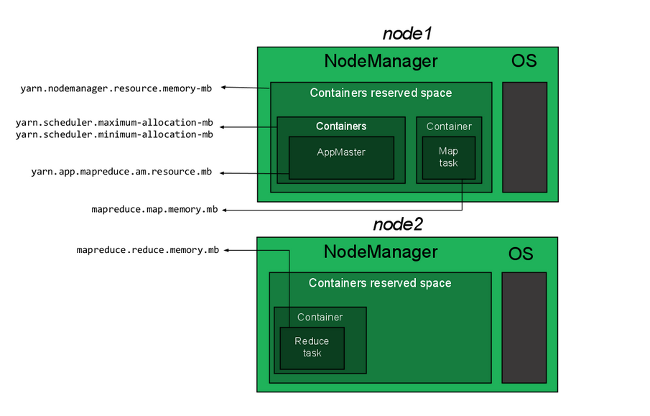

The two important components of Hadoop are as explained below,

Hadoop Installation on Ubuntu Linux

There are 3 modes of installing Hadoop:

1) Local Mode : By default, Hadoop is configured to run in standalone mode as a single Java process. In this case no daemons are running, which means there is only one JVM instance. Also note that HDFS is not used here. Only JAVA_HOME needs to be set for configuration.

2) Pseudo Distributed : The Master (Name Node, Job Tracker) and the Slaves (Data Nodes + Task Trackers) all run on a single machine. These Hadoop daemons run on one machine to simulate a cluster on a small scale. This is also known as a single-node cluster and is generally useful for developers and testers.

3) Fully Distributed : The Hadoop daemons run on separate machines, i.e. the Master (Name Node, Job Tracker) and the Slaves (Data Nodes + Task Trackers) are configured on different machines.

I will walk through the steps to install a Pseudo Distributed Single Node Hadoop cluster.

A) Java Installation : Since the Apache Hadoop framework is written in the Java programming language, we need to install a JDK.

# Update the source list using the apt-get update command.

poonam@Latitude$ sudo apt-get update

# Install the OpenJDK 7 JDK

poonam@Latitude$ sudo apt-get install openjdk-7-jdk

A.1) Quick Check : After installing the JDK, check the Java version

poonam@Latitude$ java -version

java version "1.7.0_95"

OpenJDK Runtime Environment (IcedTea 2.6.4) (7u95-2.6.4-0ubuntu0.14.04.1)

OpenJDK 64-Bit Server VM (build 24.95-b01, mixed mode)
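The same quick check can be scripted. The following is a minimal sketch, not part of the original steps; the function name java_status is made up for illustration. It only reports whether a java binary is on the PATH and, if so, prints the first line of its version banner.

```shell
# Hypothetical helper: report whether a java binary is reachable on the PATH.
java_status() {
    if command -v java >/dev/null 2>&1; then
        echo "installed"
    else
        echo "missing"
    fi
}

status="$(java_status)"
echo "java is $status"

# java -version writes its banner to stderr, hence the 2>&1.
if [ "$status" = "installed" ]; then
    java -version 2>&1 | head -n 1
fi
```

If the script reports "missing", repeat the installation step above before continuing.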

B) Adding a dedicated user

Though this step is not compulsory, I follow it to keep my Hadoop installation separate from other software applications. We will create a new group “hadoop” and a new user “hduser”, and add hduser to the hadoop group using the addgroup and adduser commands.

poonam@Latitude$ sudo addgroup hadoop

poonam@Latitude$ sudo adduser --ingroup hadoop hduser

Switch user

poonam@Latitude$ su - hduser
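It can be worth confirming that the new account really is in the hadoop group. The sketch below assumes the standard id command; the helper name in_group is invented for this example, and the demo line uses the current user so that it can be run even before hduser exists.

```shell
# Hypothetical helper: succeed if user $1 is a member of group $2.
in_group() {
    id -nG "$1" | tr ' ' '\n' | grep -qxF -- "$2"
}

# Demo with the current user and its primary group, which always match.
me="$(id -un)"
my_group="$(id -gn)"
if in_group "$me" "$my_group"; then
    echo "$me is in group $my_group"
fi

# After step B the same check would be: in_group hduser hadoop
```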

C) Installing and Configuring SSH

Hadoop uses Secure Shell (SSH) to communicate with the slave nodes. It requires a password-less SSH connection between the master and all the slaves; without it, in a fully distributed cluster you would have to log in to each machine and start all the processes there manually. Even in a pseudo-distributed single-node cluster we need to configure SSH access and keep it password-less, otherwise we will be prompted for the password very frequently. To do so, we configure SSH access to localhost for hduser.

Install the SSH client and server using the following commands

hduser@Latitude$ sudo apt-get install ssh

hduser@Latitude$ sudo apt-get install openssh-server

Generate an SSH key pair for hduser

hduser@Latitude$ ssh-keygen -t rsa -P ""

Generating public/private rsa key pair.

Enter file in which to save the key (/home/hduser/.ssh/id_rsa):

Created directory '/home/hduser/.ssh'.

Your identification has been saved in /home/hduser/.ssh/id_rsa.

Your public key has been saved in /home/hduser/.ssh/id_rsa.pub.

You will see something similar on the console. This is just an example and not the actual key fingerprint.

Example:

The key fingerprint is:

d2:65:43:bd:b0:cb:b1:c5:7e:39:f6:1d:1e:6e:a7:bd hduser@Latitude

The key's randomart image is:

+--[ RSA 2048]----+
|        ..       |
|       ....      |
|       =+..      |
|        oo+      |
|        S. * .   |
|         + . =o  |
|             ooo+|
|               =+|
|              oE+|
+-----------------+

This creates an RSA key pair with an empty passphrase. This is generally not recommended, but as discussed above we need password-less SSH access to avoid entering the passphrase every time Hadoop communicates with its nodes.

Next, enable SSH access to the local machine with the newly created key:

hduser@Latitude:$ cat $HOME/.ssh/id_rsa.pub >> $HOME/.ssh/authorized_keys

We can check if SSH is working properly:

hduser@Latitude:$ ssh localhost

The authenticity of host 'localhost (::1)' can't be established.

RSA key fingerprint is c6:56:35:67:be:03:00:da:1c:95:2f:aa:33:t9:36:26.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'localhost' (RSA) to the list of known hosts.
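If ssh localhost still asks for a password after the key has been added, file permissions are a common cause: with its default StrictModes setting, sshd refuses keys from an authorized_keys file or .ssh directory that other users can write to. The sketch below applies the permissions usually recommended (700 for the directory, 600 for the file). The helper name secure_ssh_dir is made up, and the demo works on a scratch directory rather than the real ~/.ssh.

```shell
# Hypothetical helper: create an SSH directory with restrictive permissions.
secure_ssh_dir() {
    mkdir -p "$1"
    touch "$1/authorized_keys"
    chmod 700 "$1"                  # only the owner may enter the directory
    chmod 600 "$1/authorized_keys"  # only the owner may read or write the file
}

# Demo on a scratch directory so that the real ~/.ssh is left untouched.
demo_dir="$(mktemp -d)/.ssh"
secure_ssh_dir "$demo_dir"
ls -ld "$demo_dir" "$demo_dir/authorized_keys"
```

For the setup in this post, the equivalent call would be secure_ssh_dir "$HOME/.ssh", run as hduser.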

D) Disabling IPv6

Why do we disable IPv6 for Hadoop?

1) As stated in the Apache Hadoop Wiki:

Apache Hadoop is not currently supported on IPv6 networks. It has only been tested and developed on IPv4 stacks. Hadoop needs IPv4 to work, and only IPv4 clients can talk to the cluster. If your organisation moves to IPv6 only, you will encounter problems. Some Linux releases default to being IPv6 only. That means unless the systems are configured to re-enable IPv4, some machines will break.

2) We are setting up a pseudo-distributed single-node cluster, i.e. all the masters and slaves reside on one machine. Since we are not connecting to any IPv6 network, there is no point in enabling IPv6 here.

To disable IPv6 on Ubuntu 10.04 LTS, open /etc/sysctl.conf in any editor and add the following lines to the end of the file:

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1

Reboot the machine in order to make these changes take effect. You can check whether IPv6 is disabled on your machine with the following command:

hduser@Latitude:$ cat /proc/sys/net/ipv6/conf/all/disable_ipv6

A return value of 1 means IPv6 is disabled.
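The check above can be wrapped in a small function. This is only a sketch: the name ipv6_status and the optional file argument are assumptions made for the example (the argument exists so the function can be tried against a scratch file).

```shell
# Hypothetical helper: interpret the kernel's disable_ipv6 flag.
# $1 is optional and defaults to the file checked in this post.
ipv6_status() {
    flag_file="${1:-/proc/sys/net/ipv6/conf/all/disable_ipv6}"
    if [ ! -r "$flag_file" ]; then
        echo "unknown"      # file missing or unreadable on this system
    elif [ "$(cat "$flag_file")" = "1" ]; then
        echo "disabled"
    else
        echo "enabled"
    fi
}

echo "IPv6 is $(ipv6_status)"
```

On most systems sudo sysctl -p reloads /etc/sysctl.conf without a reboot, though rebooting as described above is the surest way to have every service pick up the change.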

E) Install Hadoop

Download Hadoop from the Apache download mirrors.

The best practice is to keep the Hadoop installation in the /usr/local/hadoop directory, though the location may vary as per your choice. The commands below assume the downloaded archive has been placed in /usr/local. Make sure to change the owner of all the files to the hduser user and hadoop group.

hduser@Latitude:$ cd /usr/local

hduser@Latitude:$ sudo tar xzf hadoop-1.0.3.tar.gz

hduser@Latitude:$ sudo mv hadoop-1.0.3 hadoop

hduser@Latitude:$ sudo chown -R hduser:hadoop hadoop
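Unpacking the archive produces a versioned directory (hadoop-1.0.3), which has to be renamed to hadoop for the /usr/local/hadoop paths used in the rest of this post to exist. The sketch below rehearses that unpack-and-rename sequence on a dummy archive in a scratch directory, so it needs neither sudo nor the real download; the dummy file contents are placeholders.

```shell
# Build a stand-in archive with the same top-level directory name.
work="$(mktemp -d)"
mkdir -p "$work/src/hadoop-1.0.3/bin"
echo "placeholder" > "$work/src/hadoop-1.0.3/bin/hadoop"
tar czf "$work/hadoop-1.0.3.tar.gz" -C "$work/src" hadoop-1.0.3

# "$work/local" plays the role of /usr/local.
mkdir "$work/local"
(
    cd "$work/local" &&
    tar xzf ../hadoop-1.0.3.tar.gz &&   # creates hadoop-1.0.3/
    mv hadoop-1.0.3 hadoop              # rename to the path used later on
)
ls "$work/local/hadoop/bin"
```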

F) Setup Configuration Files for Pseudo Distributed Single Node cluster

The following files will have to be modified to complete the Hadoop setup:

1. Update ~/.bashrc:

Assuming that you are using the bash shell, add the following lines to the end of the $HOME/.bashrc file of user hduser.

# Set Hadoop-related environment variables
export HADOOP_HOME=/usr/local/hadoop

# Add Hadoop bin/ directory to PATH
export PATH=$PATH:$HADOOP_HOME/bin
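If the setup is repeated, appending these lines by hand can leave duplicates in .bashrc. A minimal sketch of an idempotent append follows; the helper name add_line is hypothetical, and the demo writes to a scratch file instead of the real ~/.bashrc.

```shell
# Hypothetical helper: append line $1 to file $2 unless it is already there.
add_line() {
    grep -qxF -- "$1" "$2" || printf '%s\n' "$1" >> "$2"
}

rc_file="$(mktemp)"   # stand-in for $HOME/.bashrc

# Single quotes keep $PATH and $HADOOP_HOME unexpanded in the file.
add_line 'export HADOOP_HOME=/usr/local/hadoop' "$rc_file"
add_line 'export PATH=$PATH:$HADOOP_HOME/bin' "$rc_file"
add_line 'export HADOOP_HOME=/usr/local/hadoop' "$rc_file"   # no-op the second time

cat "$rc_file"
```

After editing the real file, run source ~/.bashrc or open a new shell so that the variables take effect.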

2. hadoop-env.sh

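To configure Hadoop we have to set the Java environment variable, i.e. JAVA_HOME, in conf/hadoop-env.sh.

By default, the relevant lines of hadoop-env.sh are

# The java implementation to use. Required.
# export JAVA_HOME=/usr/lib/j2sdk1.5-sun

Change them to point to the JDK installed in step A. For the openjdk-7-jdk package on 64-bit Ubuntu the path is typically /usr/lib/jvm/java-7-openjdk-amd64; adjust it if your JDK is installed elsewhere.

# The java implementation to use. Required.
export JAVA_HOME=/usr/lib/jvm/java-7-openjdk-amd64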
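The correct JAVA_HOME depends on which JDK package is installed, so it can help to derive it from the java binary instead of typing it from memory. The following sketch assumes GNU readlink (standard on Ubuntu); the helper name java_home_from is made up, and the result is only a suggestion that should be checked against the contents of /usr/lib/jvm.

```shell
# Hypothetical helper: turn the real path of the java binary into a JDK home
# by stripping the trailing /bin/java and, on some layouts, /jre.
java_home_from() {
    jpath="$1"
    jpath="${jpath%/bin/java}"
    jpath="${jpath%/jre}"
    printf '%s\n' "$jpath"
}

if command -v java >/dev/null 2>&1; then
    # readlink -f follows the /etc/alternatives symlinks to the real binary.
    real_java="$(readlink -f "$(command -v java)")"
    echo "Suggested JAVA_HOME: $(java_home_from "$real_java")"
else
    echo "java not found on PATH"
fi
```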

3. core-site.xml

The core-site.xml file contains configuration properties that Hadoop uses when starting up. Before we edit this file, we have to create a “tmp” directory at the path “/app/hadoop/tmp” and change its owner to hduser and its group to hadoop

hduser@Latitude:$ sudo mkdir -p /app/hadoop/tmp

hduser@Latitude:$ sudo chown -R hduser:hadoop /app/hadoop/tmp

Now add the following code to conf/core-site.xml, between the <configuration> and </configuration> tags

<property>
  <name>hadoop.tmp.dir</name>
  <value>/app/hadoop/tmp</value>
  <description>A base for other temporary directories.</description>
</property>

<property>
  <name>fs.default.name</name>
  <value>hdfs://localhost:54310</value>
  <description>The name of the default file system. A URI whose
  scheme and authority determine the FileSystem implementation. The
  uri's scheme determines the config property (fs.SCHEME.impl) naming
  the FileSystem implementation class. The uri's authority is used to
  determine the host, port, etc. for a filesystem.</description>
</property>
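The snippets in this section are fragments: in each Hadoop site file, the property elements sit inside a single configuration root element. As a sketch of what the finished core-site.xml might look like, the script below writes a complete file into a scratch directory (not into /usr/local/hadoop/conf) with the descriptions omitted for brevity, and counts the properties it contains.

```shell
conf_dir="$(mktemp -d)"   # stand-in for /usr/local/hadoop/conf

# Quoted heredoc delimiter: the XML is written exactly as shown.
cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/app/hadoop/tmp</value>
  </property>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://localhost:54310</value>
  </property>
</configuration>
EOF

echo "properties defined: $(grep -c '<property>' "$conf_dir/core-site.xml")"
```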

4. Add the following code to conf/mapred-site.xml

<property>
  <name>mapred.job.tracker</name>
  <value>localhost:54311</value>
  <description>The host and port that the MapReduce job tracker runs at.
  If "local", then jobs are run in-process as a single map and
  reduce task.</description>
</property>

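5. Add the following code to conf/hdfs-site.xml. On a single-node cluster there is only one DataNode, so the replication factor is set to 1.

<property>
  <name>dfs.replication</name>
  <value>1</value>
  <description>Default block replication. The actual number of replications can be
  specified when the file is created. The default is used if replication is
  not specified in create time.</description>
</property>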
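After editing three XML files by hand, a quick way to double-check a setting is to read it back. The helper below is a rough sketch using awk, not a real XML parser: it assumes each value element follows its name element and fits on one line. The name get_prop, the scratch file, and the dfs.replication value of 1 are assumptions made for the demonstration.

```shell
# Hypothetical helper: print the value of property $2 in site file $1.
get_prop() {
    awk -v want="<name>$2</name>" '
        index($0, want) { found = 1 }
        found && match($0, /<value>[^<]*<\/value>/) {
            # Skip the 7 characters of <value>; drop the 8 of </value>.
            print substr($0, RSTART + 7, RLENGTH - 15)
            exit
        }
    ' "$1"
}

demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
<configuration>
  <property>
    <name>mapred.job.tracker</name>
    <value>localhost:54311</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
EOF

echo "mapred.job.tracker = $(get_prop "$demo_file" mapred.job.tracker)"
echo "dfs.replication    = $(get_prop "$demo_file" dfs.replication)"
```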

G) Formatting the HDFS filesystem via the NameNode

This is the first step to starting up Hadoop. It formats HDFS (the Hadoop Distributed File System), which is implemented on top of the local filesystem of your “cluster”.

You need to do this the first time you set up a Hadoop cluster.

Do not format a running Hadoop filesystem as you will lose all the data currently in the cluster (in HDFS)!

To format HDFS:

hduser@Latitude:~$ /usr/local/hadoop/bin/hadoop namenode -format
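Because formatting destroys existing data, a guard that looks for NameNode metadata before formatting can be useful. The sketch below relies on an assumption about Hadoop 1.x defaults: that the NameNode keeps its metadata under dfs/name inside hadoop.tmp.dir and writes a current/VERSION file there when it is formatted. The helper name needs_format is made up, and the script only prints advice; it never runs the format command itself.

```shell
# Hypothetical helper: succeed if no NameNode metadata exists under
# the given hadoop.tmp.dir (assumed layout: dfs/name/current/VERSION).
needs_format() {
    [ ! -f "$1/dfs/name/current/VERSION" ]
}

tmp_dir="/app/hadoop/tmp"   # the hadoop.tmp.dir value used in this post
if needs_format "$tmp_dir"; then
    echo "No NameNode metadata under $tmp_dir: formatting is expected."
else
    echo "NameNode metadata found under $tmp_dir: do not format again."
fi
```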

H) Finally, Starting up your Pseudo-Distributed Single-Node Cluster

Run this command

hduser@Latitude:~$ start-all.sh

If you get the error “start-all.sh: command not found”, run the command with its full path instead:

hduser@Latitude:~$ /usr/local/hadoop/bin/start-all.sh

To check if the installation was successful, type in the following command

hduser@Latitude:~$ jps

The output will look similar to this (excerpt; the process IDs will differ on your machine)

2149 JobTracker

2085 SecondaryNameNode

1788 NameNode

In all, jps should list 6 Java processes: TaskTracker, JobTracker, DataNode, SecondaryNameNode, NameNode and Jps itself. If any of the five Hadoop daemons is missing, the installation has gone wrong somewhere and needs to be debugged.
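Comparing the jps listing against the expected daemon names by eye is error-prone, so the comparison can be scripted. The sketch below reads jps-style output ("PID Name" per line) on standard input and prints every expected daemon that is absent. The function name missing_daemons is hypothetical, and the demo feeds it the three sample lines shown in this post instead of calling jps.

```shell
# Hypothetical helper: list expected Hadoop daemons absent from jps output.
missing_daemons() {
    jps_out="$(cat)"
    for daemon in NameNode SecondaryNameNode DataNode JobTracker TaskTracker; do
        # Exact match on the second column, so NameNode does not
        # accidentally match SecondaryNameNode.
        printf '%s\n' "$jps_out" |
            awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }' ||
            printf '%s\n' "$daemon"
    done
}

sample="2149 JobTracker
2085 SecondaryNameNode
1788 NameNode"

missing="$(printf '%s\n' "$sample" | missing_daemons)"
echo "Missing daemons:"
echo "${missing:-none}"
```

On a running cluster the call would be jps | missing_daemons, and an empty result means all five daemons are up.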

To stop the cluster

hduser@Latitude:~$ stop-all.sh

If you get the error “stop-all.sh: command not found”, run the command with its full path instead:

hduser@Latitude:~$ /usr/local/hadoop/bin/stop-all.sh

These are the steps required to set up your own single-node cluster.

In case of any queries, concerns or suggestions please comment or write to [email protected]
